Voice input often appears effortless: you speak, the system reacts. In practice, turning raw microphone data into reliable, intention-aware actions is one of the messier problems in real-time interaction design, especially on standalone headsets, where latency, performance, and memory constraints are unforgiving.

This post walks through the architecture of a speech-to-action system we built in Unity. It is not about a single speech-to-text API call, but about the layers around it: how speech is detected in the first place, how intent is separated from background chatter, and how imperfect recognition results are turned into something users can still rely on.

The goal was a system that feels natural, works offline, degrades gracefully when parts fail, and remains understandable to debug when something goes wrong.

About the Idea

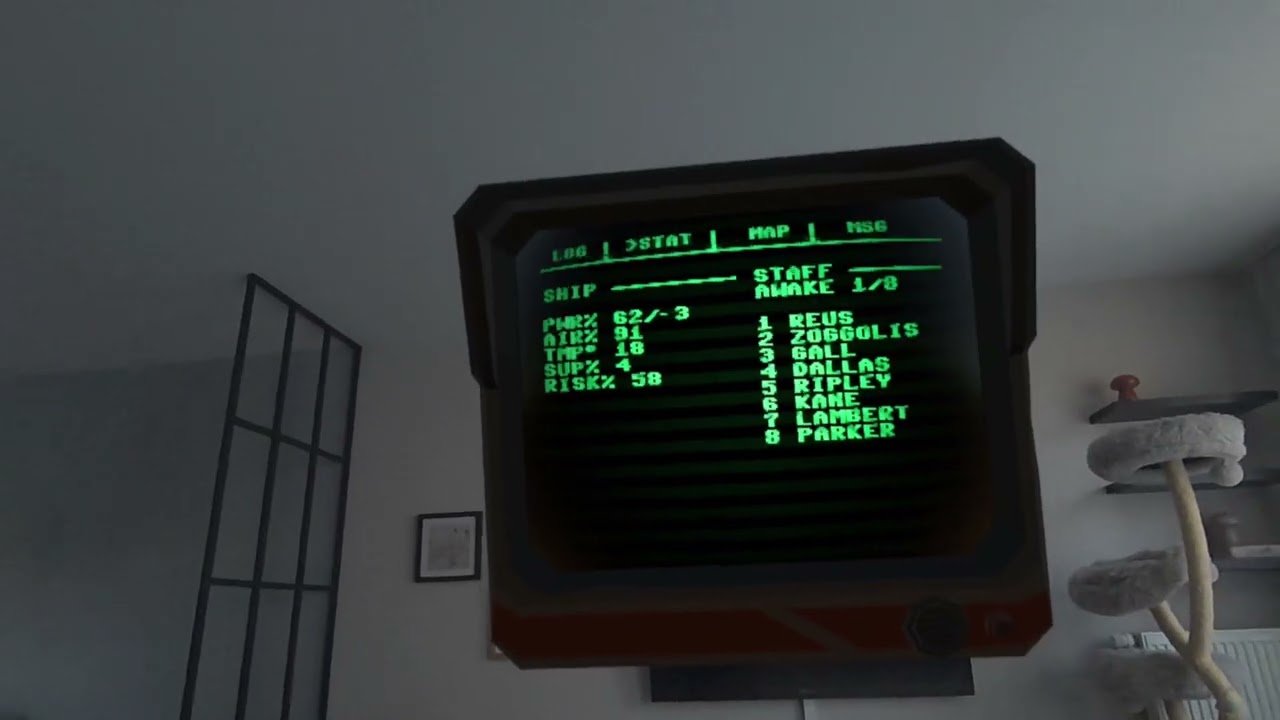

The voice command system was built for a specific Mixed Reality game where players view a diorama-like spacecraft scene with no traditional controls. Only voice commands work.

Players take the role of the ship's AI and encounter a crew member emerging from cryo-sleep aboard a hibernating deep-space vessel. The gameplay is asymmetric: players never act directly but instead manipulate ship systems like heating, doors, and supplies through voice. The crew member responds to these environmental changes: opening a door prompts exploration, while an unheated room eventually leads to demise.

This creates strategic tension as players balance power, temperature, and access to ensure both survival and forward progress, with voice as the sole interface and crew behavior as the feedback mechanism.

Here's a video describing the whole experience:


From Audio to Intent

Viewed from the outside, the pipeline can be summarized quickly: microphone input goes in, actions come out. The complexity hides in everything in between. Raw audio is continuous, noisy, and mostly irrelevant. Commands are sparse, intentional, and need to be interpreted quickly.

To keep the system responsive, the flow is split into a few conservative stages:

- detect whether there is speech at all (VAD)

- recognize and transcribe the speech (speech-to-text)

- decide whether the user is addressing the system (wake word)

- interpret intent and map it to scene actions (parsing + matching)

Expensive work only happens when cheaper checks suggest it is worth doing.
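As a rough illustration of this gating, here is a minimal Python sketch (the project itself is built in Unity, so this is not the actual implementation). The class name `GatedPipeline`, the injected callables, and the wake word "computer" are all assumptions made for the example. The `calls` list only exists to show which stages actually ran.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class GatedPipeline:
    """Hypothetical pipeline: each stage runs only if the cheaper one before it passed."""
    is_speech: Callable[[bytes], bool]   # cheap check (VAD)
    transcribe: Callable[[bytes], str]   # expensive step (speech-to-text)
    wake_word: str                       # assumed wake word, not from the article
    interpret: Callable[[str], str]      # parsing + matching
    calls: List[str] = field(default_factory=list)  # trace of stages that ran

    def process(self, chunk: bytes) -> Optional[str]:
        self.calls.append("vad")
        if not self.is_speech(chunk):
            return None                  # silence: nothing else runs
        self.calls.append("stt")
        words = self.transcribe(chunk).lower().split()
        if self.wake_word not in words:
            return None                  # speech, but not addressed to the system
        self.calls.append("interpret")
        # Keep only the words after the wake word as the command.
        command = " ".join(words[words.index(self.wake_word) + 1:])
        return self.interpret(command)
```

Feeding this an empty chunk only ever touches the VAD stage; transcription and interpretation are skipped entirely.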

Speech Recognition vs Transcription

In this project, it helps to separate two things that often get lumped together under "Automatic Speech Recognition" (short: "ASR").

First, there is speech recognition in the literal sense: detecting whether a user is speaking at all, and carving the audio stream into meaningful speech segments. Second, there is transcription: turning those detected speech segments into text. Both parts have to cope with accents, pacing, incomplete words, background noise, and the fact that spoken language rarely follows clean grammatical boundaries.

Transcription is treated as a streaming process. Partial results arrive while the user is still speaking, and a final result is emitted once speech ends. Partial results are fast and noisy; final results are slower but more reliable. Both are useful.

Partial transcription is primarily used for feedback and wake word detection. Final transcription is what actually drives command execution.

Why Voice Activity Detection Comes First

Running speech recognition continuously on raw microphone input is wasteful. Most of the time, nobody is speaking. Even when they are, it might not be directed at the system.

Voice Activity Detection (VAD) acts as the first gate. It looks at audio and answers a narrow question: is there human speech right now?

The primary implementation uses a small neural-network-based VAD (TEN-VAD). It is trained specifically to distinguish speech from non-speech, even when background noise is present. Instead of reacting to volume alone, it recognizes speech-like patterns, which dramatically reduces false activations.

We used the "unity-asr" project on GitHub as a starting point. This repository implements ASR based on TEN-VAD and speech-to-text using Vosk and Wav2Vec2. However, we ran into several issues when running it directly on a standalone headset like the Meta Quest 3. For example, the original library included in the ASR Unity project generated page alignment warnings and caused crashes on Meta Quest, so we switched to the updated libten_vad.so as per this commit.

To mitigate this and other unexpected issues, the system always includes a fallback.

When TEN‑VAD is unavailable or misbehaving, it switches automatically to a simple energy-based detector that measures signal power via RMS (Root Mean Square, a simple measure of average signal energy over time). This fallback is far less accurate, but it works everywhere and ensures the system never completely breaks.

In both variants, the output is intentionally small: a speech / no-speech decision plus a confidence score you can threshold. If no speech is detected, the rest of the pipeline stays idle.

In practice, the trade-off is simple:

- TEN-VAD is better at ignoring "loud but not speech" noise

- energy-based detection is easier to fool, but nearly impossible to break
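The energy-based fallback is simple enough to sketch in a few lines of Python. This is an illustration under assumptions, not the project's Unity code: the threshold of 0.02 (for samples normalized to the range -1 to 1), the confidence formula, and the function names are all made up for the example. The `primary` argument stands in for any better detector such as TEN-VAD.

```python
import math
from typing import Callable, Optional, Sequence, Tuple

Detection = Tuple[bool, float]  # (is_speech, confidence in 0..1)


def rms(samples: Sequence[float]) -> float:
    """Root mean square of a block of samples (0.0 for an empty block)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def energy_vad(samples: Sequence[float], threshold: float = 0.02) -> Detection:
    """Crude detector: anything at or above the threshold counts as speech."""
    level = rms(samples)
    # Assumed confidence mapping: 0.5 at the threshold, capped at 1.0.
    confidence = min(1.0, level / (2.0 * threshold))
    return level >= threshold, confidence


def detect_speech(
    samples: Sequence[float],
    primary: Optional[Callable[[Sequence[float]], Detection]] = None,
    threshold: float = 0.02,
) -> Detection:
    """Use the primary detector if it works, otherwise fall back to energy."""
    if primary is not None:
        try:
            return primary(samples)
        except Exception:
            pass  # primary detector misbehaved: fall through to the fallback
    return energy_vad(samples, threshold)
```

Both paths return the same small result shape, so the rest of the pipeline does not need to know which detector produced it.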

Offline Speech-to-Text with Vosk

For speech-to-text transcription, the system uses Vosk, an offline speech-to-text engine. This decision was largely driven by constraints common in standalone headsets and similar embedded contexts: unreliable connectivity, tight latency budgets, and a strong desire to keep audio data on-device. Another practical factor is model size: in testing, both the English and German language models come in at roughly 50 MB each, which makes them easy to ship, load, and iterate on even in memory-constrained environments.

Offline recognition removes an entire class of failure modes. There are no network round trips, no API quotas, and no silent outages. Latency is predictable, and privacy concerns are easier to reason about.

The reference implementation did all the work on the main thread, which hurt frame times on a Meta Quest 3. In our Unity implementation, Vosk therefore runs on a background worker thread. Audio capture and state management remain on the main thread, while recognition happens asynchronously. Results are passed back through thread-safe queues, keeping frame times stable even during longer utterances.
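The threading pattern can be sketched as follows. This is a Python illustration of the general idea rather than the Unity code: `RecognitionWorker` and its methods are hypothetical names, and `recognize` is a stand-in for whatever blocking recognizer call is used.

```python
import queue
import threading
from typing import Callable, List, Tuple


class RecognitionWorker:
    """Runs a blocking recognizer off the main thread (hypothetical sketch)."""

    def __init__(self, recognize: Callable[[str], str]):
        self._recognize = recognize        # stand-in for the real recognizer
        self._audio: queue.Queue = queue.Queue()
        self._results: queue.Queue = queue.Queue()
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def submit(self, chunk) -> None:
        """Called from the main thread; never blocks on recognition."""
        self._audio.put(chunk)

    def poll(self) -> List[Tuple[str, str]]:
        """Drain finished results without blocking; call once per frame."""
        out = []
        while True:
            try:
                out.append(self._results.get_nowait())
            except queue.Empty:
                return out

    def stop(self) -> None:
        self._audio.put(None)              # sentinel: finish queued work, then exit
        self._thread.join()

    def _run(self) -> None:
        while True:
            chunk = self._audio.get()
            if chunk is None:
                break
            try:
                self._results.put(("ok", self._recognize(chunk)))
            except Exception as exc:
                # Errors are reported as results instead of killing the worker.
                self._results.put(("error", str(exc)))
```

The main loop only ever calls `submit` and `poll`, both of which return immediately, so a slow utterance cannot stall a frame.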

Wake Words and Intentional Interaction

One of the most important design decisions is that the system does not treat all speech as commands. A wake word defines intent.

While ASR is running, partial transcription results are scanned for configured wake words. When one is detected, the system switches into a listening mode. Only then does it start accumulating speech into a command.

Any text before the wake word is discarded. Anything after it becomes part of the command. From the user's perspective, this feels natural: say the wake word, then speak normally.

Listening mode is time-bound. If no further speech is detected after a configurable interval, the system assumes the command is complete and moves on to interpretation. This avoids awkward "are you still there?" states and keeps interaction snappy.
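A minimal Python sketch of this behavior, under a few assumptions: the class name, the wake word "computer", and the 1.5 second default are invented for the example, and each call to `on_text` is assumed to deliver new, non-overlapping text. Cumulative partial results would need de-duplication first.

```python
from typing import List, Optional, Sequence


class WakeWordListener:
    """Discards text before the wake word, collects text after it."""

    def __init__(self, wake_words: Sequence[str], timeout: float = 1.5):
        self.wake_words = {w.lower() for w in wake_words}
        self.timeout = timeout             # seconds of silence that end a command
        self.listening = False
        self._words: List[str] = []
        self._last_speech = 0.0

    def on_text(self, text: str, now: float) -> None:
        """Feed newly recognized text; `now` is a timestamp in seconds."""
        for word in text.lower().split():
            if self.listening:
                self._words.append(word)   # after the wake word: part of the command
            elif word in self.wake_words:
                self.listening = True      # everything before this was dropped
        if self.listening:
            self._last_speech = now

    def tick(self, now: float) -> Optional[str]:
        """Call regularly; returns the command once the timeout has elapsed."""
        if self.listening and now - self._last_speech >= self.timeout:
            command = " ".join(self._words)
            self.listening = False
            self._words = []
            return command
        return None
```

Passing timestamps in explicitly keeps the logic easy to test without waiting on a real clock.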

Turning Text into Commands

Once a final transcription is available, the system parses it into structure. Commands follow a simple mental model: an action, an optional target, and an optional argument.

- "Activate door."

- "Ping system three."

- "Restart."

The parser normalizes the text, splits it into tokens, and assigns roles based on position and type. Numbers are interpreted as arguments when possible. Everything else is resolved against known verbs and objects.

This simplicity is intentional. Voice commands should be predictable to debug and easy to extend without introducing a full natural-language grammar.
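To make the action / target / argument model concrete, here is a small Python sketch. The vocabulary sets, the number-word table, and the exact role rules are assumptions chosen to fit the three example commands; the real parser may assign roles differently.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical vocabulary, just enough for the examples in this post.
VERBS = {"activate", "ping", "restart"}
OBJECTS = {"door", "system"}
NUMBER_WORDS = {
    "zero": 0, "one": 1, "two": 2, "three": 3, "four": 4, "five": 5,
    "six": 6, "seven": 7, "eight": 8, "nine": 9, "ten": 10,
}


@dataclass
class Command:
    action: str
    target: Optional[str] = None
    argument: Optional[int] = None


def parse(text: str) -> Optional[Command]:
    """Normalize, tokenize, and assign roles by position and type."""
    # Lowercase and replace anything that is not a letter, digit, or space.
    tokens = re.sub(r"[^a-z0-9 ]", " ", text.lower()).split()
    action = target = argument = None
    for token in tokens:
        if token.isdigit():
            argument = int(token)
        elif token in NUMBER_WORDS:
            argument = NUMBER_WORDS[token]
        elif action is None and token in VERBS:
            action = token                 # first known verb is the action
        elif action is not None and target is None and token in OBJECTS:
            target = token                 # first known object after the verb
    return Command(action, target, argument) if action else None
```

With this vocabulary, "Ping system three." parses to `Command(action='ping', target='system', argument=3)`, and text without a known verb yields `None`.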

Dealing with Imperfect Recognition

Speech recognition makes mistakes. To compensate, the system uses fuzzy matching based on Levenshtein distance. Instead of requiring exact matches, it measures how many single-character edits separate the recognized word from known commands or object names.

Thresholds scale with word length. Short words are treated more strictly to avoid accidental matches. Longer words can tolerate more errors without becoming ambiguous.

This allows text like "show lock" to resolve correctly to "show log" without the user ever noticing that recognition was imperfect.

Exact matches are always preferred. Fuzzy matching only activates when necessary, keeping performance predictable and avoiding surprising interpretations.
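The matching logic can be sketched in Python as follows. The Levenshtein function is the standard dynamic-programming version; the threshold rule (one allowed edit per four characters) and the tie handling are assumptions, since the actual values are not given here. With this particular rule, "show lock" resolves to "show log" when compared as a whole phrase, while the single word "lock" is too short to be stretched to "log".

```python
from typing import Iterable, Optional


def levenshtein(a: str, b: str) -> int:
    """Number of single-character insertions, deletions, or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(
                prev[j] + 1,               # deletion
                cur[j - 1] + 1,            # insertion
                prev[j - 1] + (ca != cb),  # substitution (free if equal)
            ))
        prev = cur
    return prev[-1]


def max_edits(text: str) -> int:
    """Assumed rule: short inputs must match exactly, longer ones get slack."""
    return len(text) // 4


def best_match(text: str, candidates: Iterable[str]) -> Optional[str]:
    candidates = list(candidates)
    if text in candidates:
        return text                        # exact matches are always preferred
    limit = max_edits(text)
    scored = sorted((levenshtein(text, c), c) for c in candidates)
    within = [(d, c) for d, c in scored if d <= limit]
    if not within:
        return None
    if len(within) > 1 and within[0][0] == within[1][0]:
        return None                        # tie: treat as ambiguous, do not guess
    return within[0][1]
```

Because the exact check runs first, the distance computation is only paid for input that did not match verbatim.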

Aliases and Natural Language Flexibility

People rarely use the same words consistently. One user says "door", another says "entrance", a third says "gate". All of them mean the same thing.

The system supports aliases at every level: verbs, objects, and even parameter values. All aliases resolve to a single canonical key internally, so execution logic stays clean and deterministic.

Fuzzy matching applies to aliases as well, which means recognition errors and vocabulary differences are handled by the same mechanism.
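One way to picture the alias layer is a flat lookup table from every spoken form to its canonical key, with the matching strategy plugged in separately. The Python sketch below is illustrative only: the alias lists and function names are invented, and `matcher` is a hypothetical hook where a fuzzy matcher could be passed instead of the exact one.

```python
from typing import Callable, Dict, Iterable, List, Optional

Matcher = Callable[[str, Iterable[str]], Optional[str]]


def exact(word: str, candidates: Iterable[str]) -> Optional[str]:
    """Default matcher: only verbatim hits count."""
    return word if word in set(candidates) else None


def build_alias_index(aliases: Dict[str, List[str]]) -> Dict[str, str]:
    """Flatten {canonical: [aliases]} into {spoken form: canonical}."""
    index: Dict[str, str] = {}
    for canonical, names in aliases.items():
        for name in [canonical, *names]:
            name = name.lower()
            # Refuse alias tables where one spoken form means two things.
            if index.setdefault(name, canonical) != canonical:
                raise ValueError(f"alias {name!r} maps to two canonical keys")
    return index


def resolve(word: str, index: Dict[str, str],
            matcher: Matcher = exact) -> Optional[str]:
    """Map a spoken word to its canonical key, or None if unknown."""
    hit = matcher(word.lower(), index.keys())
    return index[hit] if hit is not None else None
```

For example, with `build_alias_index({"door": ["entrance", "gate"]})`, both "gate" and "door" resolve to the canonical key `"door"`, so execution logic only ever sees one name.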

The result is a system that feels flexible to speak to, but rigid and predictable under the hood.

States, Errors, and Degradation

Internally, the system moves through a small set of explicit states: idle, speaking, listening, and processing. Each state has clear responsibilities and exit conditions.

Errors are treated as part of normal operation. Ambiguous targets are reported back to the user instead of guessed. Unknown commands do not crash the pipeline; they simply keep the system listening.

If VAD fails, the fallback activates. If ASR encounters an error, it is logged and isolated. The goal is always the same: keep the system responsive, even when individual components misbehave.
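The four states can be modeled as a small transition table. The Python sketch below is a guess at a plausible wiring: the state names come from the text above, but the event names and the exact transitions are assumptions. Events that make no sense in the current state are simply ignored, which is one way to keep the system responsive instead of failing.

```python
from enum import Enum


class State(Enum):
    IDLE = "idle"              # no speech detected
    SPEAKING = "speaking"      # speech detected, transcription running
    LISTENING = "listening"    # wake word heard, collecting a command
    PROCESSING = "processing"  # parsing and matching the command


# Assumed events and transitions; the real system may differ.
TRANSITIONS = {
    (State.IDLE, "speech_start"): State.SPEAKING,
    (State.SPEAKING, "speech_end"): State.IDLE,
    (State.SPEAKING, "wake_word"): State.LISTENING,
    (State.LISTENING, "timeout"): State.PROCESSING,
    (State.PROCESSING, "done"): State.IDLE,
}


def step(state: State, event: str) -> State:
    """Return the next state; unknown events leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)
```

Keeping every legal transition in one table also gives a single place to look when the system ends up in an unexpected state.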

Closing Thoughts

What makes voice interaction hard is not speech recognition alone, but everything around it: knowing when to listen, when to ignore, and how to recover gracefully when things are unclear.

By layering cheap detection before expensive processing, using offline recognition, and embracing fuzzy matching instead of fighting it, this system ends up feeling far more reliable than its individual components would suggest.

Most importantly, it stays understandable. When something goes wrong, there is a clear place to look. And that matters just as much as recognition accuracy when you are building real-time interactive systems!

Work with Us

Voice-only interaction isn't just interesting for games. It can work really well for training, simulations, accessibility, or any situation where hands-free interaction makes sense.

If you're working on something like this, or have an idea where voice or XR could be useful, feel free to reach out. We're always happy to explore ideas and build prototypes together.